I presented at the 2017 NSW Secondary Deputy Principals Association Conference this week on embedding effective questioning into assessment for learning. According to research, teachers ask 400 questions a day and wait under 1 second for students to reply, and most of these are lower-order questions that require students to recall facts. The research also shows that asking more higher-order questions leads to more on-task behaviour, better responses and more speculative thinking from students.

There are other reasons why teachers ask questions, like asking a question to wake up the student daydreaming at the back of the class, or asking students to repeat the instructions for an activity to make sure they know what to do. These are fine, as long as teachers know the reasons for those questions (and these types of questions do not dominate class time).

Strategic questioning is key to assessment for learning, yet questioning is often viewed as an intuitive skill, something that teachers “just do”. Questioning matters for students at every grade level, but the new syllabuses and school-based assessment requirements for the HSC give teachers an opportunity to rethink how they design and implement assessment for learning in Stage 6. While many teachers are creating new units of work and resources for the new Stage 6 syllabuses, it is a good time to look at strategic questioning and embed quality questions and questioning techniques.

What do good questions look like?

For assessment for learning, there are two main reasons why teachers ask questions:

- To gather evidence of learning to inform the next step in teaching

- To make students think

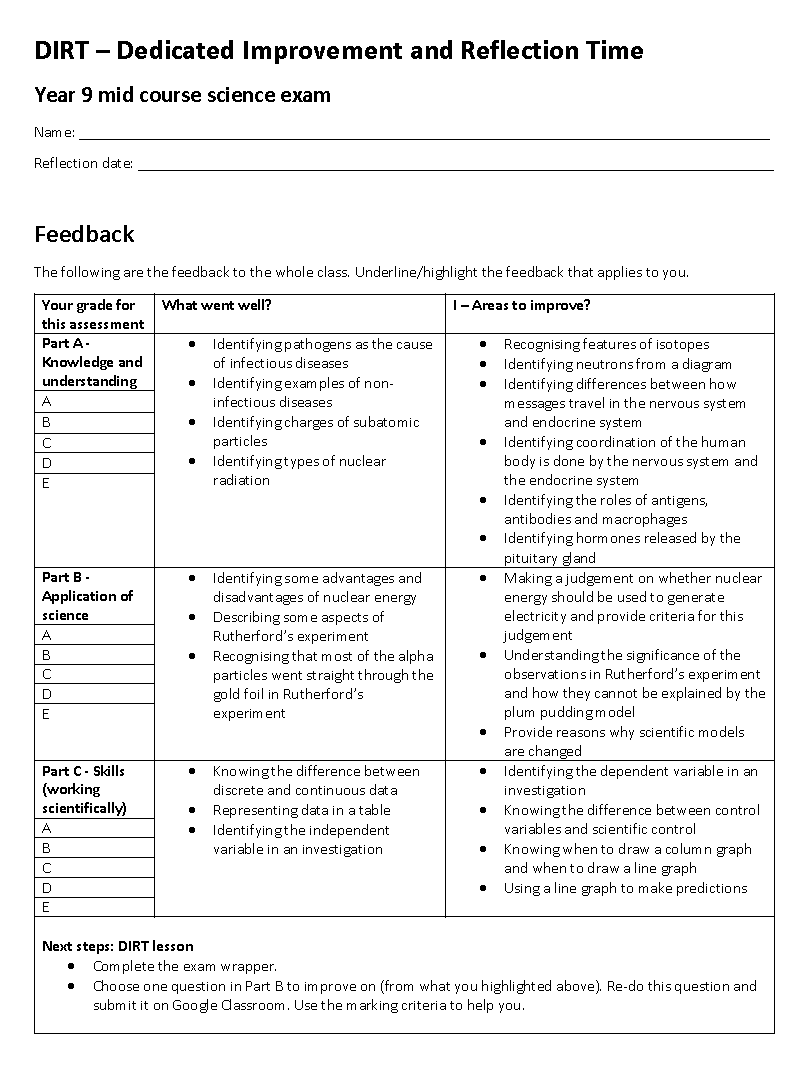

How effective these questions are depends on how each question is designed, how it is asked, and how the responses are collected and analysed to inform the next step in teaching and learning. Here are some strategies:

Hinge questions

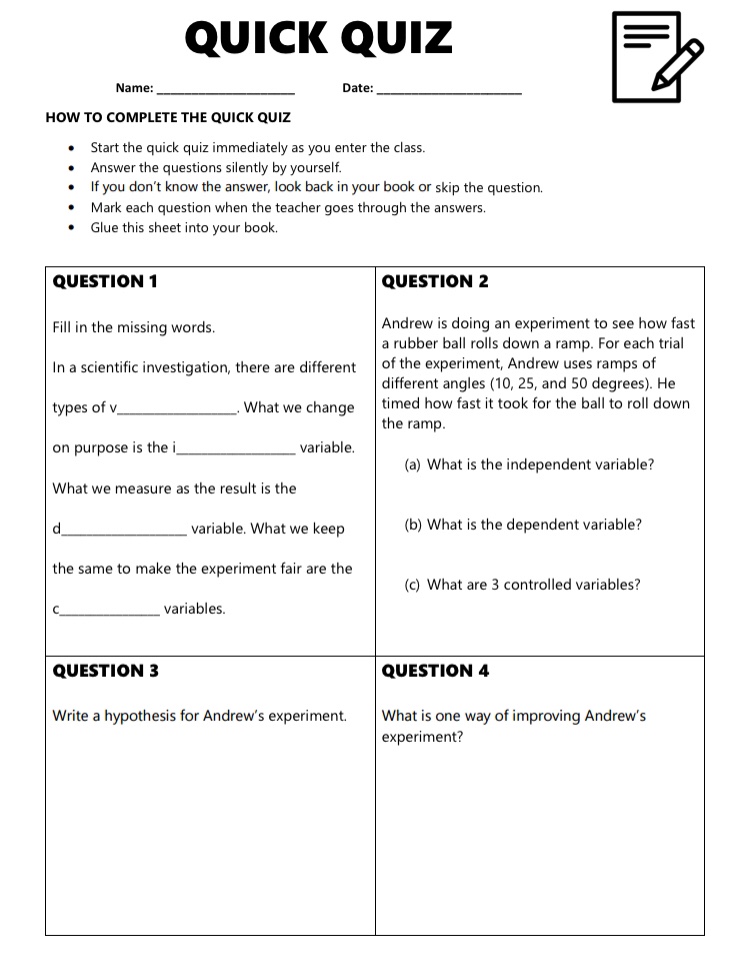

Hinge questions are often (but not always) multiple choice questions. The teacher asks the whole class a hinge question around the middle of the lesson to decide whether students have understood the lesson’s critical concepts well enough to move on. Hinge questions have four essential components:

- The question is based on a critical concept for that lesson that students must understand.

- Every student must respond to the question.

- The teacher is able to collect every student’s response and interpret the responses in under 30 seconds. (This is why many hinge questions are multiple choice.)

- Before the lesson, the teacher must have decided what teaching and learning will follow for:

  - the students who have answered correctly

  - the students who have answered incorrectly

Here is an example of a hinge question:

The question assesses students’ understanding of validity, reliability and accuracy in scientific investigations, three concepts that many students confuse. It can be used in a lesson on investigation design once validity, reliability and accuracy have been explained. Towards the end of this explanation (typically around the middle of the lesson), the teacher asks all students the question and then decides on the next steps for the students who “get it” and those who don’t. For this question, the correct answer (key) is B. Note that the wrong answers (distractors) in a hinge question must be plausible, so that students cannot reach the correct answer through the wrong thinking. A really good hinge question has distractors that each reveal a specific misconception.

Here is another example of a hinge question from Education Scotland.

For this question, the key is B. The annotated blue boxes show the wrong thinking behind each distractor.
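To make the 30-second interpretation step concrete, here is a minimal Python sketch of what the teacher does mentally when scanning the whiteboards: tally the options, work out the share of the class that chose the key, and group the students who chose each distractor by the misconception it reveals. The function name `analyse_hinge`, the student names and the misconception labels are all hypothetical; in practice the labels would come from annotations like the ones described above.

```python
from collections import Counter

def analyse_hinge(responses, key, misconceptions):
    """Summarise the whiteboard answers to one multiple-choice hinge question.

    responses      -- dict mapping each student to the option they held up
    key            -- the correct option, e.g. "B"
    misconceptions -- dict mapping each distractor to the misconception it reveals
    """
    tally = Counter(responses.values())          # how many boards show each option
    got_it = [name for name, ans in responses.items() if ans == key]
    not_yet = {}                                 # misconception -> students to follow up
    for name, ans in responses.items():
        if ans != key:
            reason = misconceptions.get(ans, "unexpected answer")
            not_yet.setdefault(reason, []).append(name)
    share_correct = len(got_it) / len(responses) if responses else 0.0
    return tally, share_correct, got_it, not_yet


# Hypothetical class of five; B is the key, and the labels are made up.
responses = {"Ava": "B", "Ben": "A", "Cho": "B", "Dev": "C", "Eli": "B"}
misconceptions = {
    "A": "confuses accuracy with reliability",
    "C": "confuses validity with reliability",
    "D": "thinks repeating a trial makes it valid",
}
tally, share, got_it, not_yet = analyse_hinge(responses, "B", misconceptions)
print(f"{share:.0%} correct")   # 60% correct
print(not_yet)                  # Ben and Dev, grouped by misconception
```

The decision itself, such as moving on when most of the class is correct, is still the teacher’s judgement, made using the plans decided before the lesson.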

So how do you implement hinge questions? How do you ask them so that every student responds and you can collect and interpret their responses, and decide the next step in under 30 seconds?

No hands up

The first thing to do is to create a class culture of “No Hands Up”. Students put up their hands only to ask questions, not to answer them. Either everyone answers, or the teacher selects who answers. When the teacher selects, the selection must be random so that every student is accountable for answering. This ensures that it is not just the “Lisa Simpsons” or the daydreaming student who answers the questions. To make this work, teachers can use mini whiteboards and a randomisation method.

Mini whiteboards can be purchased, or made cheaply by laminating sheets of white paper. For hinge questions, students write down their response (A, B, C, D, etc.) and hold up their whiteboards when the teacher says so. The teacher can then scan every board, and therefore every student’s response, to see approximately how many students have understood the critical concept, and decide what activities the rest of the class will do while the teacher intervenes with the students who have not understood. The key to hinge questions is to intervene during the lesson.

As Dylan Wiliam says,

> It means that you can find out what’s going wrong with students’ learning … If you don’t have this opportunity, then you’ll have to wait until you grade their work. And then, long after the students have left the classroom.

Alternatively, you can use digital tools like Plickers, Kahoot and Mentimeter. I personally find mini whiteboards the easiest to implement.

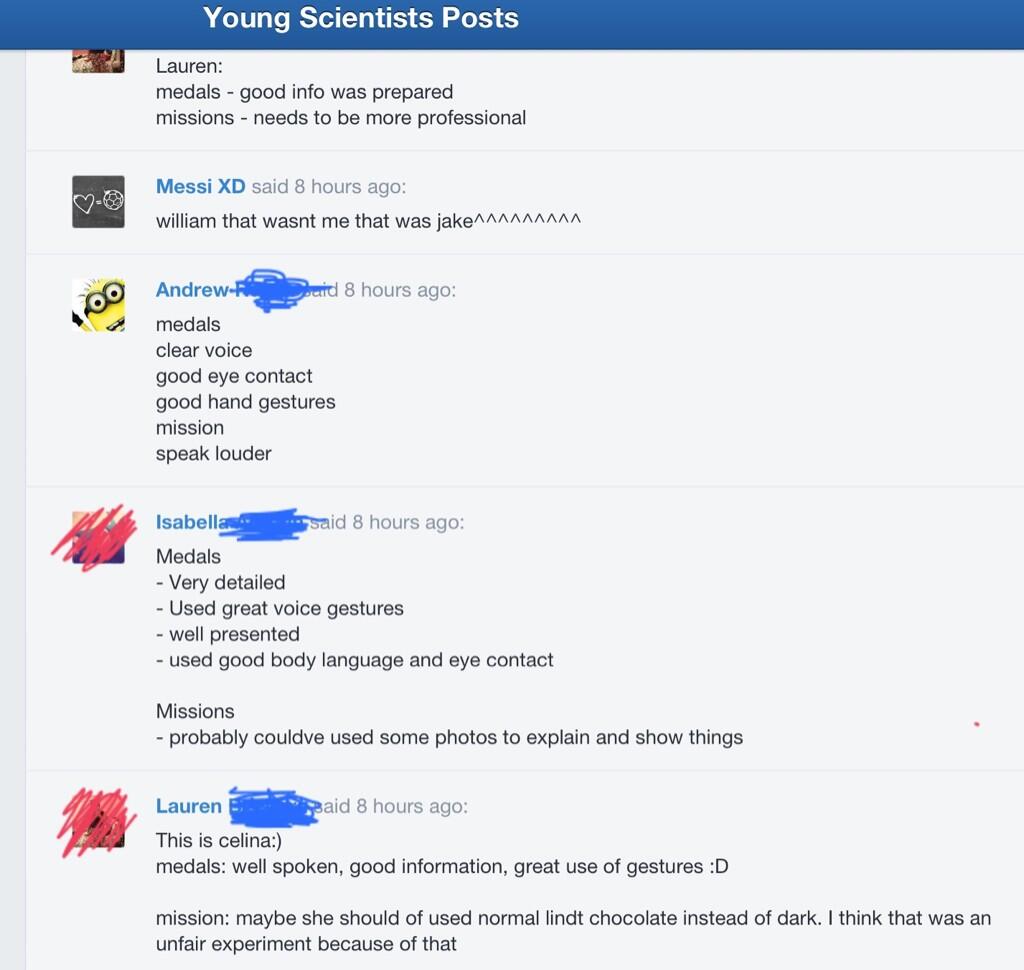

While hinge questions require everyone to respond, other questions are more suited to randomly selecting a student to respond. Teachers can use these strategies:

- A digital random name generator, such as those in Classtools and Class Dojo.

- Writing each student’s name on a paddle pop stick and drawing a stick out of a cup.
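A digital random name generator works much like the cup of sticks. The Python sketch below is a hypothetical `StickCup` class, not the API of Classtools, Class Dojo or any other tool. It assumes drawn sticks are set aside until everyone has had a turn and the cup is then refilled; passing `replace=True` puts each stick straight back instead, so a student who has just answered can still be picked again and stays accountable.

```python
import random

class StickCup:
    """A digital cup of paddle pop sticks, one student name per stick.

    Hypothetical helper: by default each drawn stick is set aside until
    every name has been drawn, then the cup is refilled. With replace=True
    the stick goes straight back, so anyone can be picked at any time.
    """

    def __init__(self, names, replace=False, seed=None):
        self.names = list(names)
        if not self.names:
            raise ValueError("the cup needs at least one name")
        self.replace = replace
        self.rng = random.Random(seed)   # seed only to make a demo repeatable
        self.cup = list(self.names)

    def draw(self):
        if not self.cup:                 # everyone has had a turn: refill the cup
            self.cup = list(self.names)
        name = self.rng.choice(self.cup)
        if not self.replace:
            self.cup.remove(name)        # set this stick aside for now
        return name


cup = StickCup(["Ava", "Ben", "Cho", "Dev"])
print([cup.draw() for _ in range(4)])    # each name once, in a random order
```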

Higher order questions

Selecting a student at random to answer is better suited to higher-order questions. The key is to plan higher-order questions before class so that you avoid asking too many lower-order questions. There are numerous strategies for planning a sequence of questions that moves from lower order to higher order. There are heaps of resources for using Bloom’s question stems (just Google it). A less popular strategy, but one I find more accessible to students, is the Wiederhold question matrix.

Questions are created by combining a column heading with a row heading, e.g. “What is …”, “Where did …”, “How might …”.
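Because every question stem pairs one column heading with one row heading, the full set of stems is simply every combination of the two lists. This Python sketch assumes question words as one set of headings and verbs as the other, inferred from the examples above; the printed matrix you use may have different or additional headings, and the sketch makes no attempt to order the stems from lower to higher order.

```python
from itertools import product

# Assumed headings, inferred from "What is", "Where did" and "How might";
# swap in the headings from the matrix you actually use.
QUESTION_WORDS = ("What", "Where", "When", "Which", "Who", "Why", "How")
VERBS = ("is", "did", "can", "would", "will", "might")

def question_stems(words=QUESTION_WORDS, verbs=VERBS):
    """Every stem formed by pairing one question word with one verb."""
    return [f"{word} {verb} …" for word, verb in product(words, verbs)]


stems = question_stems()
print(len(stems))   # 42
print(stems[:3])    # ['What is …', 'What did …', 'What can …']
```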

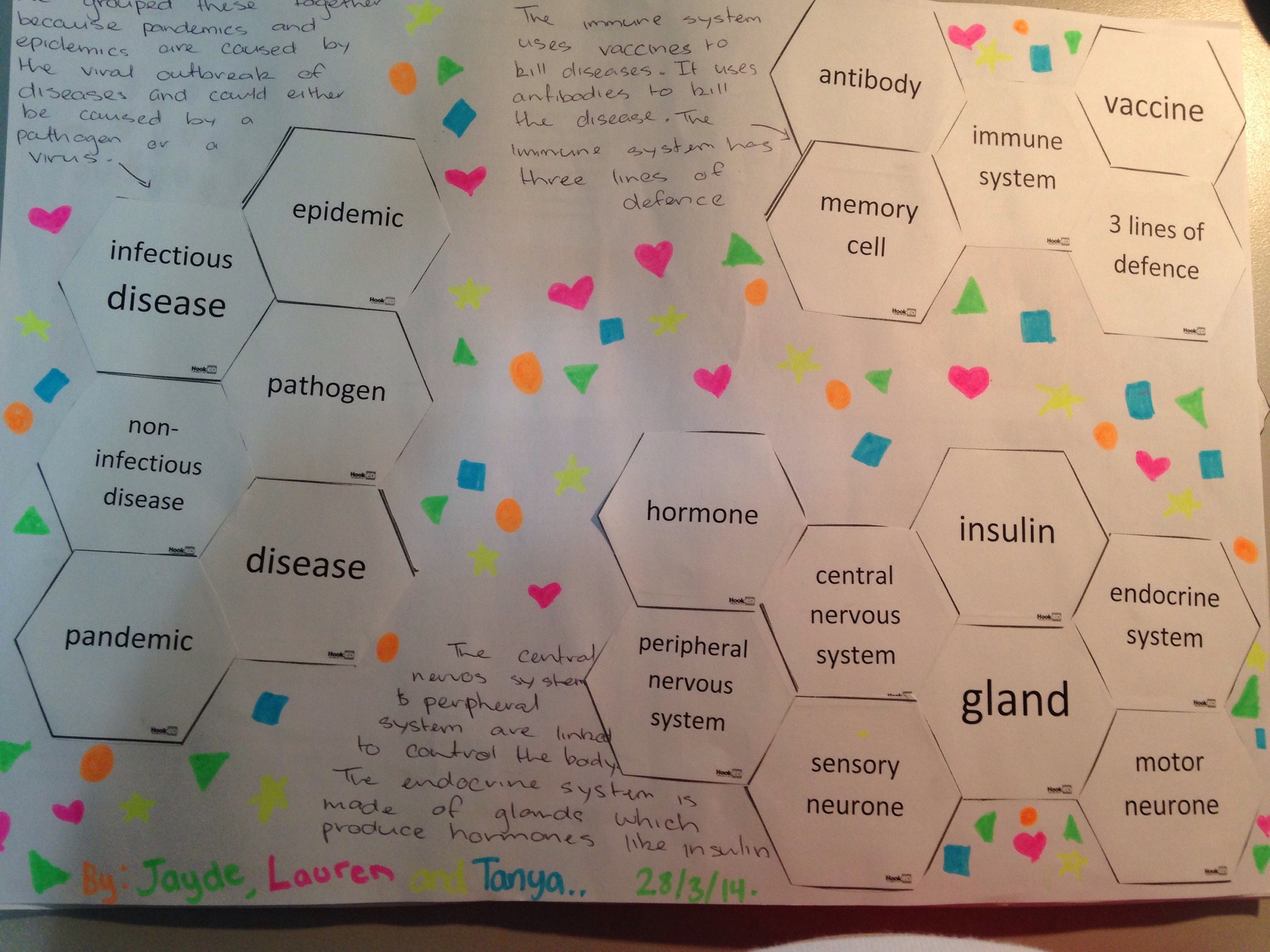

Teachers can put a stimulus in the middle of the table for students to create their own questions, like this Stage 6 Modern History source I found via Kate Littlejohn.

Some sample questions include:

- What is an ally? What is an opponent?

- Who decides who is an ally and who is an opponent?

- What is WWI? Where did it happen?

- Why did WWI happen?

- How would you decide who paid the highest price in WWI? What criteria would you use?

- How might the numbers in each category compare if a world war happened today?

Neither writing hinge questions nor creating a sequence of questions is easy. It is worthwhile for teachers to build a bank of hinge questions and higher-order questions as they collaboratively create units of work and resources.

You can find more information and resources on questioning in assessment for learning here.

Wait, wait and wait

Lastly, regardless of what questions you are asking (hinge questions, higher-order questions or questions to wake up students), remind yourself to wait. Wait at least 3 seconds after lower-order questions and more than 3 seconds after higher-order questions; the longer, the better.

Potential of hinge questions in flipped learning

As an interesting note, I think hinge questions can be very useful in flipped learning. A hinge question can be asked at the start of the lesson to assess who has understood the concept from the instructional videos and who hasn’t, so the teacher can decide how the rest of the lesson should run. Hinge questions can also be built into the instructional video at key points, so that the video continues one way if students answer correctly and another way if they answer incorrectly.
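The branching idea can be sketched as a small data structure. In this Python example, each hypothetical `HingePoint` stores a question, its key and the two segments that could play next; the question text and file names are made up for illustration and do not come from any particular video platform.

```python
from dataclasses import dataclass

@dataclass
class HingePoint:
    """A hinge question embedded at a key point in an instructional video."""
    question: str
    key: str            # correct option, written in upper case
    if_correct: str     # segment to play next when the answer matches the key
    if_incorrect: str   # segment that re-teaches the concept another way

def next_segment(hinge: HingePoint, answer: str) -> str:
    """Choose which part of the video plays after the student answers."""
    if answer.strip().upper() == hinge.key:
        return hinge.if_correct
    return hinge.if_incorrect


hinge = HingePoint(
    question="Which option describes a reliable experiment?",   # hypothetical
    key="B",
    if_correct="part-2-controlled-variables.mp4",
    if_incorrect="part-1b-reliability-revisited.mp4",
)
print(next_segment(hinge, "b"))   # part-2-controlled-variables.mp4
print(next_segment(hinge, "C"))   # part-1b-reliability-revisited.mp4
```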